OpenGL Camera

Related Topics: OpenGL Transform, OpenGL Projection Matrix, Quaternion to Rotation Matrix

Download: OrbitCamera.zip, trackball.zip, cameraRotate.zip, cameraShift.zip

- Overview

- Camera LookAt

- Camera Rotation (Pitch, Yaw, Roll)

- Camera Shifting and Forwarding

- Trackball Camera

- Example: Orbit Camera

- Example: Trackball

Overview

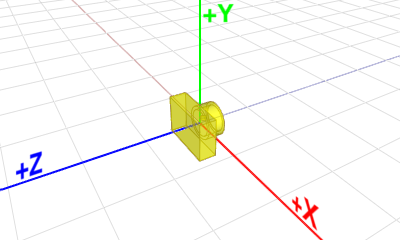

OpenGL does not explicitly define either a camera object or a specific matrix for the camera transformation. Instead, OpenGL transforms the entire scene (including the camera) inversely into a space where a fixed camera is positioned at the origin (0,0,0) and always looks along the -Z axis. This space is called eye space.

Because of this, OpenGL uses a single GL_MODELVIEW matrix for both the object transformation to world space and the camera (view) transformation to eye space.

You may break it down into 2 logical sub-matrices:

$$M_{modelView} = M_{view} \cdot M_{model}$$

That is, each object in a scene is transformed with its own $M_{model}$ first, then the entire scene is transformed inversely with $M_{view}$. On this page, we will discuss only $M_{view}$ for the camera transformation in OpenGL.
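As a minimal sketch of this decomposition, the code below uses plain column-major 4x4 arrays (the element layout OpenGL expects). The helpers `multiply`, `translation`, `scaling` and `transform` are illustrative names, not OpenGL functions. Transforming a vertex with the product $M_{view} \cdot M_{model}$ gives the same result as applying $M_{model}$ first and then $M_{view}$.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat4 = std::array<float, 16>;  // column-major: element (row, col) is m[col * 4 + row]
using Vec4 = std::array<float, 4>;

// C = A * B (B is applied to a vertex first, then A)
Mat4 multiply(const Mat4& a, const Mat4& b)
{
    Mat4 c{};
    for (int col = 0; col < 4; ++col)
        for (int row = 0; row < 4; ++row)
            for (int k = 0; k < 4; ++k)
                c[col * 4 + row] += a[k * 4 + row] * b[col * 4 + k];
    return c;
}

// translation stored in the 4th column: m[12], m[13], m[14]
Mat4 translation(float x, float y, float z)
{
    return {1, 0, 0, 0,  0, 1, 0, 0,  0, 0, 1, 0,  x, y, z, 1};
}

// uniform scale
Mat4 scaling(float s)
{
    return {s, 0, 0, 0,  0, s, 0, 0,  0, 0, s, 0,  0, 0, 0, 1};
}

// v' = M * v
Vec4 transform(const Mat4& m, const Vec4& v)
{
    Vec4 r{};
    for (int row = 0; row < 4; ++row)
        r[row] = m[row] * v[0] + m[4 + row] * v[1] + m[8 + row] * v[2] + m[12 + row] * v[3];
    return r;
}
```

For example, with an object scaled by 2 and a camera at (0, 0, 5) (view = translation by (0, 0, -5)), the vertex (1, 0, 0) ends up at (2, 0, -5) in eye space. Multiplying in the reverse order would move the scene first and then scale it, giving (2, 0, -10) instead.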

LookAt

gluLookAt() is used to construct a viewing matrix where a camera is located at the eye position $v_e = (x_e, y_e, z_e)$ and looking at (or rotating to) the target point $v_t = (x_t, y_t, z_t)$. The eye position and target are defined in world space. This section describes how to implement a viewing matrix equivalent to gluLookAt().

The camera's lookAt transformation consists of 2 transformations: translating the whole scene inversely from the eye position to the origin ($M_T$), and then rotating the scene with the reverse orientation ($M_R$), so that the camera is positioned at the origin and faces the -Z axis.

Suppose a camera is located at (2, 0, 3) and is looking at (0, 0, 0) in world space. To construct the viewing matrix for this case, we need to translate the world by (-2, 0, -3) and rotate it by approximately -33.7 degrees (that is, -atan(2/3)) around the Y-axis. As a result, the virtual camera faces the -Z axis at the origin.
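The -33.7 degree angle in this example can be checked numerically. In this sketch (the function name is illustrative), the camera's viewing direction from eye to target is (-2, 0, -3). Rotating the default direction -Z by an angle a about +Y gives (-sin a, 0, -cos a), so a = atan2(2, 3), about 33.7 degrees, and the world is rotated by the negative of that angle.

```cpp
#include <cassert>
#include <cmath>

// Yaw (right-hand rotation about +Y, in degrees) that turns the default view
// direction -Z toward the target, for a camera at (eyeX, eyeZ) on the XZ plane
double yawToTargetDegrees(double eyeX, double eyeZ, double targetX, double targetZ)
{
    const double pi = std::acos(-1.0);
    const double dirX = targetX - eyeX;   // viewing direction: eye -> target
    const double dirZ = targetZ - eyeZ;
    // rotating (0, 0, -1) by +a about Y gives (-sin a, 0, -cos a),
    // so sin a is proportional to -dirX and cos a to -dirZ
    return std::atan2(-dirX, -dirZ) * 180.0 / pi;
}
```

For the camera at (2, 0, 3) looking at the origin, `yawToTargetDegrees(2, 3, 0, 0)` returns about 33.69, so the view matrix rotates the world by about -33.7 degrees.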

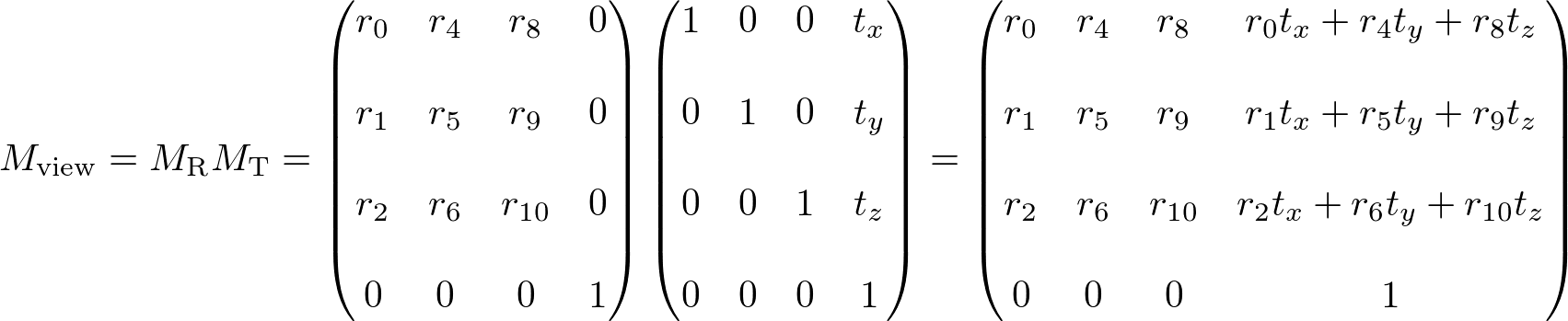

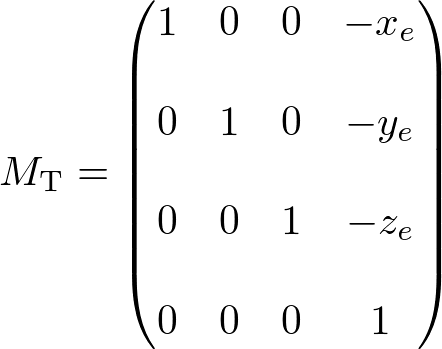

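The translation part of lookAt is easy: you simply move the camera position to the origin. The translation matrix $M_T$ is the identity matrix with the 4th column replaced by the negated eye position:

$$M_T = \begin{pmatrix} 1 & 0 & 0 & -x_e \\ 0 & 1 & 0 & -y_e \\ 0 & 0 & 1 & -z_e \\ 0 & 0 & 0 & 1 \end{pmatrix}$$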

The rotation part $M_R$ of lookAt is much harder than the translation because you have to calculate the 1st, 2nd and 3rd columns of the rotation matrix together.

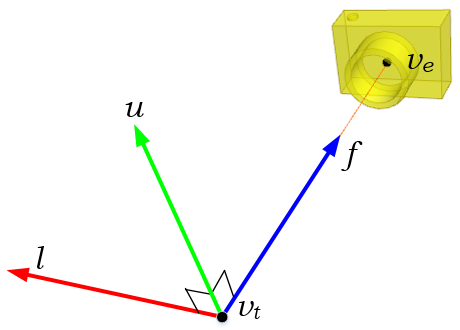

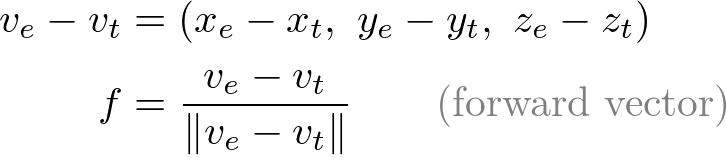

First, compute the normalized forward vector $\vec{f}$ of the rotation matrix, pointing from the target position $v_t$ to the eye position $v_e$:

$$\vec{f} = \frac{v_e - v_t}{\lVert v_e - v_t \rVert}$$

Note that the forward vector points from the target to the eye, not from the eye to the target, because it is actually the scene that is rotated, not the camera.

Second, compute the normalized left vector $\vec{l}$ by taking the cross product of the camera's given up vector $\vec{up}$ and the forward vector:

$$\vec{l} = \frac{\vec{up} \times \vec{f}}{\lVert \vec{up} \times \vec{f} \rVert}$$

If the up vector is not provided, you may use (0, 1, 0), assuming the camera is upright along the +Y axis.

Finally, recompute the up vector $\vec{u}$ as the cross product of the forward and left vectors, so that all 3 vectors are orthonormal (mutually perpendicular and unit length):

$$\vec{u} = \vec{f} \times \vec{l}$$

Note that we do not normalize the up vector, because the cross product of 2 perpendicular unit vectors is also a unit vector.
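The three steps above can be sketched with a small stand-alone vector type (not the article's Vector3 class; all names here are illustrative). The usage check applies it to the earlier example (eye at (2, 0, 3), target at the origin, up (0, 1, 0)) and confirms that the result is orthonormal without normalizing the recomputed up vector.

```cpp
#include <cassert>
#include <cmath>

struct Vec3 { double x, y, z; };

Vec3 sub(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
double dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 cross(Vec3 a, Vec3 b)
{
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}
Vec3 normalize(Vec3 v)
{
    const double len = std::sqrt(dot(v, v));
    assert(len > 0.0);  // degenerate if eye == target, or if up is parallel to forward
    return {v.x / len, v.y / len, v.z / len};
}

struct CameraBasis { Vec3 forward, left, up; };

CameraBasis computeBasis(Vec3 eye, Vec3 target, Vec3 upDir)
{
    const Vec3 f = normalize(sub(eye, target));  // 1. forward: target -> eye
    const Vec3 l = normalize(cross(upDir, f));   // 2. left = up x forward
    const Vec3 u = cross(f, l);                  // 3. up = forward x left (already unit length)
    return {f, l, u};
}
```

For the example, this yields $\vec{f} = (2, 0, 3)/\sqrt{13}$, $\vec{l} = (3, 0, -2)/\sqrt{13}$ and $\vec{u} = (0, 1, 0)$. The assertion in `normalize` also shows the one case to avoid in practice: an up vector parallel to the viewing direction has no well-defined cross product.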


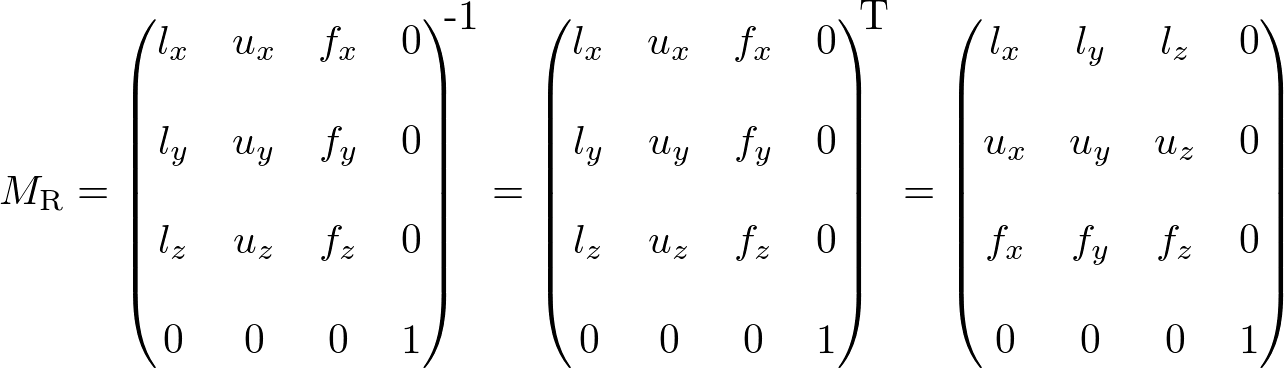

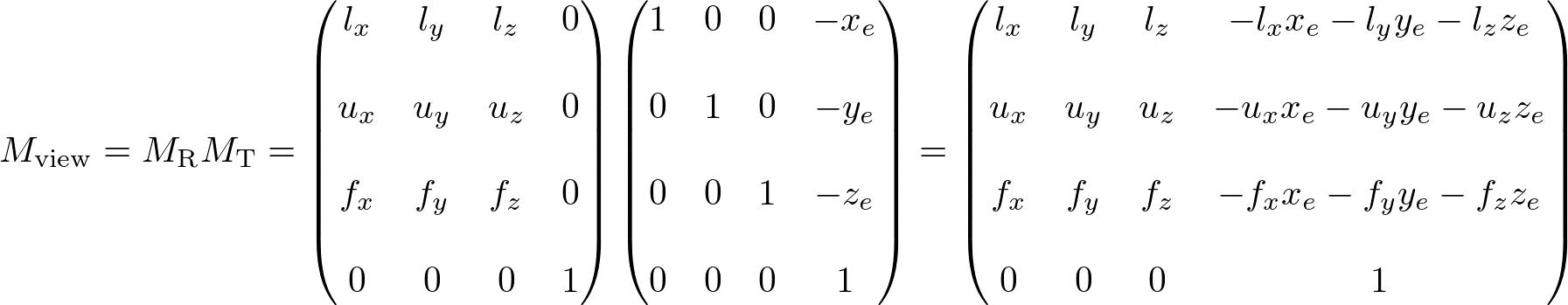

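Therefore, the rotation part $M_R$ of lookAt is obtained by building the rotation matrix from the target to the eye position, whose columns are $\vec{l}$, $\vec{u}$ and $\vec{f}$, and then inverting it. The inverse is simply the transpose, because the matrix is orthogonal (each column has unit length and is perpendicular to the other columns):

$$M_R = \begin{pmatrix} l_x & u_x & f_x & 0 \\ l_y & u_y & f_y & 0 \\ l_z & u_z & f_z & 0 \\ 0 & 0 & 0 & 1 \end{pmatrix}^{-1} = \begin{pmatrix} l_x & l_y & l_z & 0 \\ u_x & u_y & u_z & 0 \\ f_x & f_y & f_z & 0 \\ 0 & 0 & 0 & 1 \end{pmatrix}$$

Finally, the view matrix for the camera's lookAt transform is the product of $M_R$ and $M_T$ (the translation is applied first):

$$M_{view} = M_R M_T = \begin{pmatrix} l_x & l_y & l_z & -(l_x x_e + l_y y_e + l_z z_e) \\ u_x & u_y & u_z & -(u_x x_e + u_y y_e + u_z z_e) \\ f_x & f_y & f_z & -(f_x x_e + f_y y_e + f_z z_e) \\ 0 & 0 & 0 & 1 \end{pmatrix}$$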
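To check that $M_{view} = M_R M_T$ behaves as expected, the sketch below builds $M_T$ and $M_R$ separately as column-major arrays (helper names are illustrative) for the same example, using the vectors that the previous steps produce for it: $\vec{f} = (2, 0, 3)/\sqrt{13}$, $\vec{l} = (3, 0, -2)/\sqrt{13}$, $\vec{u} = (0, 1, 0)$. The eye should land on the origin, and the target on the -Z axis at distance $\sqrt{13}$.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<double, 3>;
using Mat4 = std::array<double, 16>;  // column-major: element (row, col) is m[col * 4 + row]

// C = A * B
Mat4 multiply(const Mat4& a, const Mat4& b)
{
    Mat4 c{};
    for (int col = 0; col < 4; ++col)
        for (int row = 0; row < 4; ++row)
            for (int k = 0; k < 4; ++k)
                c[col * 4 + row] += a[k * 4 + row] * b[col * 4 + k];
    return c;
}

// M_T: identity with the 4th column replaced by the negated eye position
Mat4 makeTranslation(const Vec3& eye)
{
    return {1, 0, 0, 0,
            0, 1, 0, 0,
            0, 0, 1, 0,
            -eye[0], -eye[1], -eye[2], 1};
}

// M_R: transpose of [l u f]; written column by column, so its rows are l, u and f
Mat4 makeRotation(const Vec3& l, const Vec3& u, const Vec3& f)
{
    return {l[0], u[0], f[0], 0,
            l[1], u[1], f[1], 0,
            l[2], u[2], f[2], 0,
            0,    0,    0,    1};
}

// transform a point (w = 1)
Vec3 transformPoint(const Mat4& m, const Vec3& p)
{
    Vec3 r{};
    for (int row = 0; row < 3; ++row)
        r[row] = m[row] * p[0] + m[4 + row] * p[1] + m[8 + row] * p[2] + m[12 + row];
    return r;
}
```

The product's 4th column comes out as $-(\vec{l} \cdot v_e)$, $-(\vec{u} \cdot v_e)$, $-(\vec{f} \cdot v_e)$, which is exactly what the translation part of the lookAt code writes into `matrix[12]`, `matrix[13]` and `matrix[14]`.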

Here is a C++ snippet that constructs the view matrix for the camera's lookAt transformation. Please see OrbitCamera.cpp for the details.

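```cpp
// dependency: Vector3 and Matrix4 (minimal definitions so the snippet compiles)
#include <cmath>

struct Vector3
{
    float x, y, z;

    Vector3(float x = 0, float y = 0, float z = 0) : x(x), y(y), z(z) {}

    Vector3 operator-(const Vector3& rhs) const { return Vector3(x - rhs.x, y - rhs.y, z - rhs.z); }

    // cross product: this x rhs
    Vector3 cross(const Vector3& rhs) const
    {
        return Vector3(y * rhs.z - z * rhs.y, z * rhs.x - x * rhs.z, x * rhs.y - y * rhs.x);
    }

    // make unit length (left unchanged if the length is zero)
    Vector3& normalize()
    {
        float length = std::sqrt(x * x + y * y + z * z);
        if (length > 0.0f) { x /= length; y /= length; z /= length; }
        return *this;
    }
};

class Matrix4
{
public:
    void identity()
    {
        for (int i = 0; i < 16; ++i)
            m[i] = (i % 5 == 0) ? 1.0f : 0.0f;   // 1 on the diagonal: m[0], m[5], m[10], m[15]
    }

    // column-major element access, same layout as OpenGL
    float& operator[](int index) { return m[index]; }

private:
    float m[16];
};

///////////////////////////////////////////////////////////////////////////////
// equivalent to gluLookAt()
// It returns 4x4 matrix
///////////////////////////////////////////////////////////////////////////////
Matrix4 lookAt(const Vector3& eye, const Vector3& target, const Vector3& upDir)
{
    // compute the forward vector from target to eye
    Vector3 forward = eye - target;
    forward.normalize();                  // make unit length

    // compute the left vector
    Vector3 left = upDir.cross(forward);  // cross product
    left.normalize();

    // recompute the orthonormal up vector
    Vector3 up = forward.cross(left);     // cross product

    // init 4x4 matrix
    Matrix4 matrix;
    matrix.identity();

    // set rotation part, inverse rotation matrix: M^-1 = M^T for Euclidean transform
    matrix[0] = left.x;
    matrix[4] = left.y;
    matrix[8] = left.z;
    matrix[1] = up.x;
    matrix[5] = up.y;
    matrix[9] = up.z;
    matrix[2] = forward.x;
    matrix[6] = forward.y;
    matrix[10]= forward.z;

    // set translation part
    matrix[12]= -left.x * eye.x - left.y * eye.y - left.z * eye.z;
    matrix[13]= -up.x * eye.x - up.y * eye.y - up.z * eye.z;
    matrix[14]= -forward.x * eye.x - forward.y * eye.y - forward.z * eye.z;

    return matrix;
}
```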

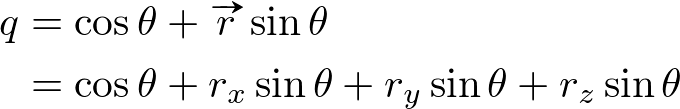

Camera Rotation (Pitch, Yaw, Roll)

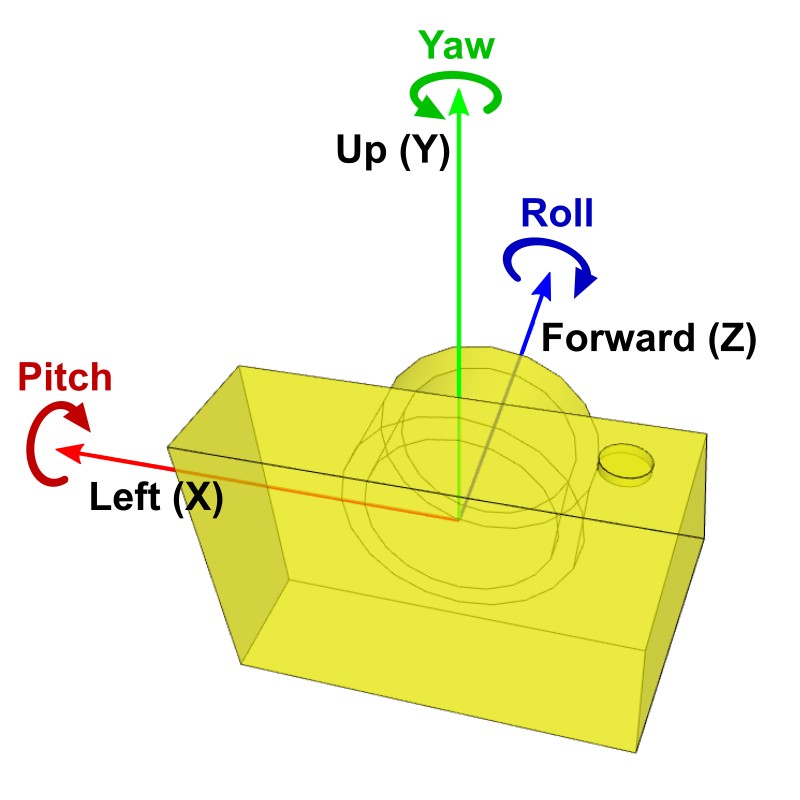

Other types of camera rotation are pitch, yaw and roll, which rotate the camera at its own position. Pitch rotates the camera up and down around the camera's local left axis (+X axis). Yaw rotates it left and right around the camera's local up axis (+Y axis). Roll rotates it around the camera's local forward axis (+Z axis).

These rotations are used for free-look style cameras, VR headset movement and first-person shooter camera interfaces.

First, you need to move the whole scene inversely from the camera's position to the origin (0,0,0), the same as in the lookAt() function. Then, rotate the scene about each rotation axis: pitch (X), yaw (Y) and roll (Z), independently.

If multiple rotations are applied, combine them sequentially. Note that a different order of rotations produces a different result. A commonly used rotation order is roll (Z) → yaw (Y) → pitch (X).
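To see why the order matters, the sketch below composes a 90-degree pitch and a 90-degree yaw in both orders using plain 3x3 rotation matrices (angles in radians; the function names are illustrative, not the article's Matrix4 API).

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat3 = std::array<std::array<double, 3>, 3>;  // row-major 3x3: m[row][col]

// right-hand rotation about the X axis
Mat3 rotateX(double rad)
{
    const double c = std::cos(rad), s = std::sin(rad);
    return {{{1, 0, 0}, {0, c, -s}, {0, s, c}}};
}

// right-hand rotation about the Y axis
Mat3 rotateY(double rad)
{
    const double c = std::cos(rad), s = std::sin(rad);
    return {{{c, 0, s}, {0, 1, 0}, {-s, 0, c}}};
}

// C = A * B (B is applied to a vector first, then A)
Mat3 multiply(const Mat3& a, const Mat3& b)
{
    Mat3 c{};
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j)
            for (int k = 0; k < 3; ++k)
                c[i][j] += a[i][k] * b[k][j];
    return c;
}

bool nearlyEqual(const Mat3& a, const Mat3& b, double eps = 1e-9)
{
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j)
            if (std::fabs(a[i][j] - b[i][j]) > eps) return false;
    return true;
}
```

With these helpers, `multiply(rotateY(a), rotateX(b))` (pitch first) and `multiply(rotateX(b), rotateY(a))` (yaw first) differ for a = b = 90 degrees, while two rotations about the same axis commute and their angles simply add.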

Keep in mind the directions of the rotations (right-hand rule): a positive pitch angle rotates the camera downward, a positive yaw angle rotates the camera to the left, and a positive roll angle rotates the camera clockwise.

The following C++ code snippet constructs the view matrix for a free-look style camera interface. Notice that only the yaw (Y) angle is negated, while the pitch and roll angles are not. This is because the Y axis points in the same direction for both the virtual camera and the view matrix, and OpenGL rotates the whole scene in the opposite direction. For instance, rotating the camera 30 degrees to the left is the same as rotating the world 30 degrees to the right around the Y-axis. The pitch (X) and roll (Z) angles, however, are not negated because the X and Z axes are already in opposite directions.

```cpp
// camera position and rotations in world space
Vector3 camPosition = ...;  // (x, y, z)
Vector3 camAngle = ...;     // (pitch, yaw, roll)

// construct view matrix using camera pos and angles (for free-look style)
Matrix4 matrixView;
matrixView.translate(-camPosition);  // 1. translate inversely
matrixView.rotateZ(camAngle.z);      // 2. rotate for roll
matrixView.rotateY(-camAngle.y);     // 3. rotate for yaw inversely
matrixView.rotateX(camAngle.x);      // 4. rotate for pitch
```
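For experimenting without the article's Matrix4 class, here is a self-contained sketch of the same sequence using column-major arrays. It assumes, as the call order in the snippet implies, that each translate/rotate call pre-multiplies the current matrix. The helper names and the degree-based `rotation(axis, degrees)` function are illustrative.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat4 = std::array<double, 16>;  // column-major: element (row, col) is m[col * 4 + row]
using Vec3 = std::array<double, 3>;

Mat4 identity()
{
    Mat4 m{};
    m[0] = m[5] = m[10] = m[15] = 1.0;
    return m;
}

// C = A * B
Mat4 multiply(const Mat4& a, const Mat4& b)
{
    Mat4 c{};
    for (int col = 0; col < 4; ++col)
        for (int row = 0; row < 4; ++row)
            for (int k = 0; k < 4; ++k)
                c[col * 4 + row] += a[k * 4 + row] * b[col * 4 + k];
    return c;
}

Mat4 translation(const Vec3& t)
{
    Mat4 m = identity();
    m[12] = t[0]; m[13] = t[1]; m[14] = t[2];
    return m;
}

// right-hand rotation about a principal axis (0 = X, 1 = Y, 2 = Z), angle in degrees
Mat4 rotation(int axis, double degrees)
{
    const double rad = degrees * std::acos(-1.0) / 180.0;
    const double c = std::cos(rad), s = std::sin(rad);
    const int i = (axis + 1) % 3, j = (axis + 2) % 3;  // the two coordinates that change
    Mat4 m = identity();
    m[i * 4 + i] = c;  m[j * 4 + i] = -s;  // (row i, col i) = c, (row i, col j) = -s
    m[i * 4 + j] = s;  m[j * 4 + j] = c;   // (row j, col i) = s, (row j, col j) = c
    return m;
}

// each step pre-multiplies, so translation is applied first, then roll, yaw (negated), pitch
Mat4 freeLookView(const Vec3& position, double pitch, double yaw, double roll)
{
    Mat4 m = translation({-position[0], -position[1], -position[2]});  // 1. translate inversely
    m = multiply(rotation(2, roll), m);                                // 2. roll (Z)
    m = multiply(rotation(1, -yaw), m);                                // 3. yaw (Y), negated
    m = multiply(rotation(0, pitch), m);                               // 4. pitch (X)
    return m;
}

Vec3 transformPoint(const Mat4& m, const Vec3& p)
{
    Vec3 r{};
    for (int row = 0; row < 3; ++row)
        r[row] = m[row] * p[0] + m[4 + row] * p[1] + m[8 + row] * p[2] + m[12 + row];
    return r;
}
```

The sign conventions can be checked directly. With a yaw of 90 degrees, a camera at the origin turns left to look down -X, so the world point (-1, 0, 0) ends up straight ahead at (0, 0, -1) in eye space. With a positive pitch, a point straight ahead appears above the center of the view, which means the camera has tilted downward.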

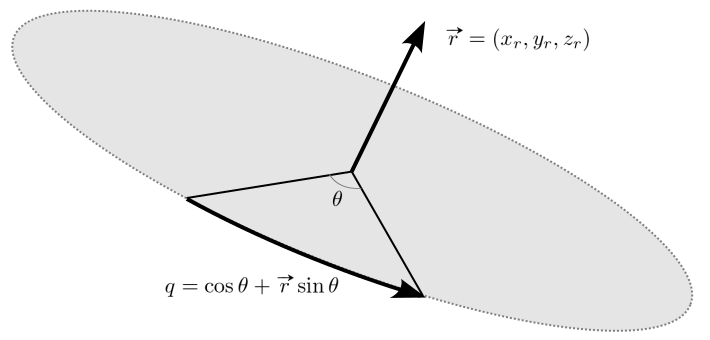

If quaternions are used for the rotations, the pitch, yaw and roll angles can be converted to quaternions from their rotation axes (X, Y and Z) and angle amounts.

Then, multiply the quaternions one after another to combine the sequence of rotations. Finally, convert the resulting quaternion to a 4x4 matrix for OpenGL. The conversion is explained in Quaternion to Rotation Matrix.

```cpp
// camera position and rotations in world space
Vector3 camPosition = ...;  // (x, y, z)
Vector3 camAngle = ...;     // (pitch, yaw, roll)

// construct rotation quaternions
// (angles are halved: a unit quaternion stores cos(angle/2) and sin(angle/2))
Quaternion qx = Quaternion(Vector3(1,0,0), camAngle.x / 2);   // pitch
Quaternion qy = Quaternion(Vector3(0,1,0), -camAngle.y / 2);  // yaw
Quaternion qz = Quaternion(Vector3(0,0,1), camAngle.z / 2);   // roll
Quaternion q = qx * qy * qz;  // rotation order: roll -> yaw -> pitch

// convert quaternion to rotation matrix
Matrix4 matrixRotation = q.getMatrix();

// construct view matrix
Matrix4 matrixView;
matrixView.identity();
matrixView.translate(-camPosition);
matrixView = matrixRotation * matrixView;  // M = Mr * Mt
```
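A minimal, self-contained quaternion sketch of the same idea follows. It is not the article's Quaternion class: here `fromAxisAngle` takes the full angle in radians and halves it internally, whereas the snippet above passes half angles to its constructor. The `rotate` function applies the standard rotation matrix of a unit quaternion.

```cpp
#include <array>
#include <cassert>
#include <cmath>

struct Quat { double w, x, y, z; };
using Vec3 = std::array<double, 3>;

// unit quaternion for a rotation of `radians` about a unit axis (half angle taken here)
Quat fromAxisAngle(const Vec3& axis, double radians)
{
    const double h = 0.5 * radians, s = std::sin(h);
    return {std::cos(h), axis[0] * s, axis[1] * s, axis[2] * s};
}

// Hamilton product: rotating by (a * b) applies b first, then a
Quat operator*(const Quat& a, const Quat& b)
{
    return {a.w * b.w - a.x * b.x - a.y * b.y - a.z * b.z,
            a.w * b.x + a.x * b.w + a.y * b.z - a.z * b.y,
            a.w * b.y - a.x * b.z + a.y * b.w + a.z * b.x,
            a.w * b.z + a.x * b.y - a.y * b.x + a.z * b.w};
}

// rotate v by the 3x3 rotation matrix of the unit quaternion q
Vec3 rotate(const Quat& q, const Vec3& v)
{
    const double w = q.w, x = q.x, y = q.y, z = q.z;
    return {(1 - 2 * (y * y + z * z)) * v[0] + 2 * (x * y - w * z) * v[1] + 2 * (x * z + w * y) * v[2],
            2 * (x * y + w * z) * v[0] + (1 - 2 * (x * x + z * z)) * v[1] + 2 * (y * z - w * x) * v[2],
            2 * (x * z - w * y) * v[0] + 2 * (y * z + w * x) * v[1] + (1 - 2 * (x * x + y * y)) * v[2]};
}
```

Rotating a vector with `qx * qy * qz` gives the same result as applying `qz`, then `qy`, then `qx` one at a time, which is why that product corresponds to the roll, yaw, pitch order.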

Download cameraRotate.zip and cameraRotateQuaternion.zip to see the complete source code for free-look style camera rotations (pitch, yaw, roll).

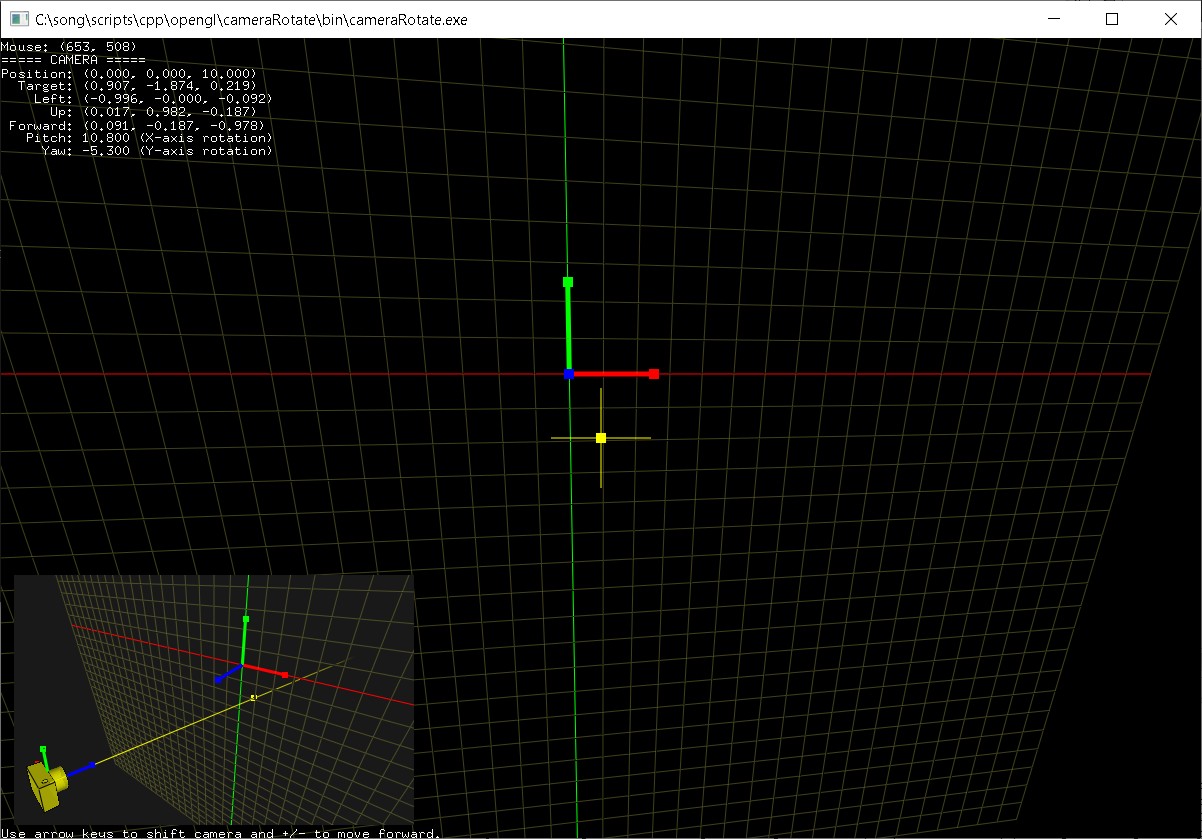

Camera Shifting & Forwarding

To update the camera's position by shifting the camera left/right or up/down, or by moving it forward/backward, you need to find the camera's 3 local axis vectors (left, up and forward) at its current position and orientation. These vectors are not the same as the X, Y and Z basis axes of world space, because the virtual camera has been oriented in an arbitrary direction. (Moving left/right is not the same as translating along the X-axis in world space.)

If you know the camera position and target points, you can compute the left, up and forward vectors from them by vector subtraction and cross product.

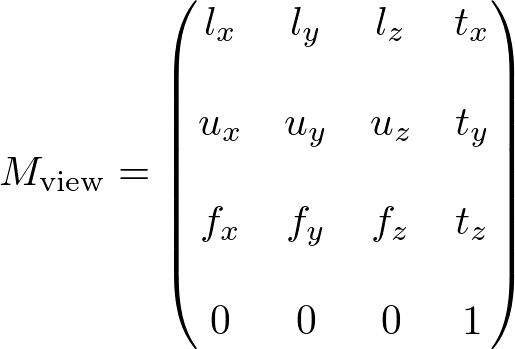

Alternatively, you can get them directly from the view matrix: the first row of the view matrix gives the left vector, the second row the up vector, and the third row the forward vector.

Since the rotation part of the view matrix is inverted (transposed), these 3 vectors are stored as row vectors, not column vectors. And since the X and Z axes of the virtual camera point in the opposite directions, we negate the left and forward vectors. (The Y axis is the same in both world space and eye space, so there is no need to modify the up vector.)

Once we have the left, up and forward vectors, we can compute the new camera position in world space by adding the delta movement along each direction vector. The following code shifts the camera horizontally and vertically, and moves it forward or backward:

```cpp
Matrix4 matrixView = ...;   // current 4x4 view matrix
Vector3 camPosition = ...;  // current pos (x, y, z)
float deltaLeft = ...;      // delta movements
float deltaUp = ...;
float deltaForward = ...;

// left/up/forward vectors are 3 row vectors from the view matrix
// Left    = Vector3(-m[0], -m[4], -m[8])
// Up      = Vector3( m[1],  m[5],  m[9])
// Forward = Vector3(-m[2], -m[6], -m[10])
Vector3 cameraLeft(-matrixView[0], -matrixView[4], -matrixView[8]);
Vector3 cameraUp(matrixView[1], matrixView[5], matrixView[9]);
Vector3 cameraForward(-matrixView[2], -matrixView[6], -matrixView[10]);

// compute delta shift and forward amount
Vector3 deltaMovement = deltaLeft * cameraLeft;  // add horizontal
deltaMovement += deltaUp * cameraUp;             // add vertical
deltaMovement += deltaForward * cameraForward;   // add forward

// update new camera's position by adding delta amount
camPosition += deltaMovement;
```
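The same update can be written as a self-contained function over a column-major array, which makes the row and sign conventions easy to test. This is a sketch with illustrative names (`moveCamera`, `Mat4`, `Vec3`), not the OrbitCamera API.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat4 = std::array<double, 16>;  // column-major view matrix: (row, col) is m[col * 4 + row]
using Vec3 = std::array<double, 3>;

// New camera position after moving along the camera's own axes,
// which are read from the rows of the view matrix
Vec3 moveCamera(const Mat4& view, const Vec3& position,
                double deltaLeft, double deltaUp, double deltaForward)
{
    const Vec3 left    = {-view[0], -view[4], -view[8]};   // negated 1st row
    const Vec3 up      = { view[1],  view[5],  view[9]};   // 2nd row as-is
    const Vec3 forward = {-view[2], -view[6], -view[10]};  // negated 3rd row

    Vec3 result = position;
    for (int i = 0; i < 3; ++i)
        result[i] += deltaLeft * left[i] + deltaUp * up[i] + deltaForward * forward[i];
    return result;
}
```

For an unrotated camera at (0, 0, 5), the view matrix is the identity with m[14] = -5, and the three axes are world -X, +Y and -Z. Once the camera is yawed 90 degrees to the left (rows (0, 0, -1), (0, 1, 0), (1, 0, 0)), moving forward follows world -X instead.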

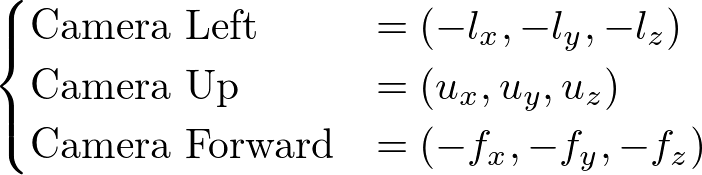

The OrbitCamera class (OrbitCamera.cpp) in cameraShift.zip provides various interfaces for camera movement: moveTo(), shiftTo() and moveForward() translate the virtual camera in any direction. For continuous animation, use startShift()/stopShift() and startForward()/stopForward().

Use the left/right arrow keys to shift the camera horizontally and the up/down arrow keys to move it vertically. Press the +/- keys to move the camera forward or backward.

Download: cameraShift.zip (Updated 2025-10-23)

Trackball Camera

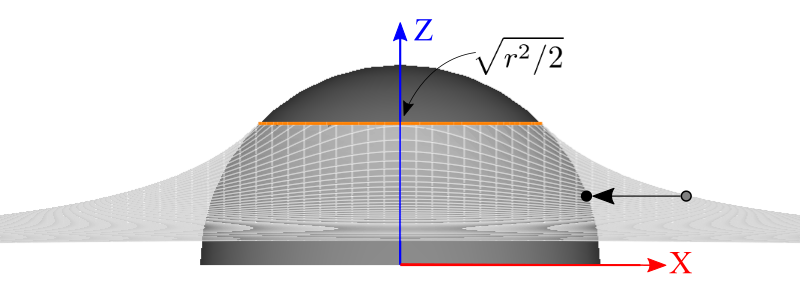

Another camera rotation interface is the trackball camera. It creates a virtual trackball (sphere) that exactly fits in the screen, then projects the mouse cursor position (x, y) onto the trackball as (x, y, z). The z-value of the position on the trackball is computed from the sphere equation, where r is the radius of the trackball:

$$x^2 + y^2 + z^2 = r^2 \quad\Rightarrow\quad z = \sqrt{r^2 - x^2 - y^2}$$
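A sketch of this projection, assuming the mouse position arrives in window pixels with the origin at the top-left and y pointing down (a common windowing convention, but check yours), and that the trackball radius is half of the smaller window dimension. Points outside the ball are simply clamped to z = 0 here; the remapping described below handles them properly. The function name is illustrative and not taken from Trackball.cpp.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<double, 3>;

// Project a mouse position (window pixels, origin top-left, y down) onto a trackball
// centered in the window whose radius is half of the smaller window side
Vec3 projectToSphere(double mouseX, double mouseY, double width, double height)
{
    const double r = 0.5 * std::fmin(width, height);
    const double x = mouseX - 0.5 * width;   // origin at the screen center
    const double y = 0.5 * height - mouseY;  // flip so +y points up
    const double d2 = x * x + y * y;
    // sphere equation: z = sqrt(r^2 - x^2 - y^2); outside the ball, clamp to z = 0
    const double z = (d2 < r * r) ? std::sqrt(r * r - d2) : 0.0;
    return {x, y, z};
}
```

In an 800x600 window (r = 300), the screen center maps to (0, 0, 300), the point of the ball closest to the viewer, and a click 180 pixels right of center maps to (180, 0, 240).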

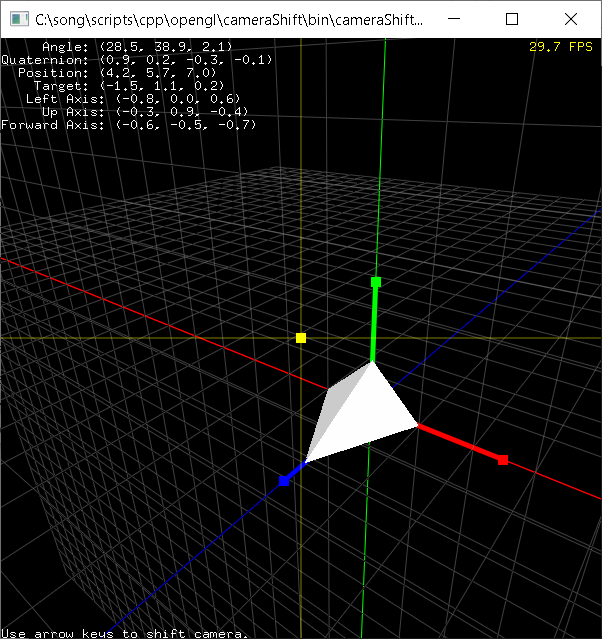

Once you find 2 points $v_1$ and $v_2$ on the trackball, you can calculate the arc angle between them with the inner (dot) product, and the rotation axis with the cross product:

$$\theta = \cos^{-1}\left(\frac{v_1 \cdot v_2}{\lVert v_1 \rVert \lVert v_2 \rVert}\right), \qquad \vec{r} = v_1 \times v_2$$

After you find the rotation axis $\vec{r}$ and angle θ, you can rotate the camera using either Rodrigues' rotation formula or a quaternion.
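The axis/angle step and Rodrigues' rotation formula can be sketched as below (illustrative names, not the Trackball class API). The formula used is $v' = v\cos\theta + (\vec{k} \times v)\sin\theta + \vec{k}(\vec{k} \cdot v)(1 - \cos\theta)$ for a unit axis $\vec{k}$.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<double, 3>;

double dot(const Vec3& a, const Vec3& b) { return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]; }

Vec3 cross(const Vec3& a, const Vec3& b)
{
    return {a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0]};
}

struct AxisAngle { Vec3 axis; double angle; };  // unit axis, angle in radians

// arc angle from the dot product, rotation axis from the cross product
AxisAngle axisAngleBetween(const Vec3& v1, const Vec3& v2)
{
    double c = dot(v1, v2) / std::sqrt(dot(v1, v1) * dot(v2, v2));
    c = std::fmax(-1.0, std::fmin(1.0, c));  // keep acos in its domain despite rounding
    Vec3 axis = cross(v1, v2);
    const double n = std::sqrt(dot(axis, axis));
    if (n > 0.0)                              // parallel vectors have no unique axis
        for (double& a : axis) a /= n;
    return {axis, std::acos(c)};
}

// Rodrigues' rotation formula: rotate v about the unit axis k by theta
Vec3 rodrigues(const Vec3& v, const Vec3& k, double theta)
{
    const Vec3 kxv = cross(k, v);
    const double kv = dot(k, v), c = std::cos(theta), s = std::sin(theta);
    Vec3 out{};
    for (int i = 0; i < 3; ++i)
        out[i] = v[i] * c + kxv[i] * s + k[i] * kv * (1.0 - c);
    return out;
}
```

Because both trackball points lie on the same sphere, rotating $v_1$ by the computed axis and angle lands exactly on $v_2$; for example, (1, 2, 2) is carried onto (2, -1, 2).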

Since the virtual trackball just fits inside the rectangular screen, some mouse positions cannot be projected onto the trackball; for example, point m3 in the above diagram is completely outside the trackball. One solution is to map the z-value of the mouse position using a parabola sheet instead of the sphere when the distance from the center of the trackball to the mouse point is greater than the distance at which the sheet and the sphere intersect.

We re-map the z value of the parabola to the trackball's z value if the mouse point is outside the trackball.

The parabola sheet equation to map the mouse point is;

This parabola sheet and sphere will intersect where the z value is ![]() .


If the distance $d = \sqrt{x^2 + y^2}$ from the center of the screen to the mouse position is greater than this intersection distance, we first find the z value by plugging the mouse (x, y) into the parabola equation. It becomes the adjusted z value on the trackball (sphere). Then, we re-adjust the mouse position (x, y) so that the point (x, y, z) is re-mapped onto the surface of the trackball.
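The sketch below does not reproduce the article's parabola sheet. Instead, it assumes the hyperbolic sheet used in Gavin Bell's trackball.c (cited below), $z = r^2 / (2d)$, which touches the sphere at $d = r/\sqrt{2}$, where both surfaces give $z = r/\sqrt{2}$; Trackball.cpp may use a different equation. The outside point's (x, y) is then scaled along its original direction so that (x, y, z) lies on the sphere, which is one way to do the re-adjustment described above. The function name is illustrative.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<double, 3>;

// Map trackball-space (x, y), origin at the ball center, onto the sphere of radius r.
// ASSUMPTION: beyond d = r / sqrt(2), z comes from the sheet z = r^2 / (2 d)
// (the sheet from Bell's trackball.c), not necessarily the one in Trackball.cpp.
Vec3 mapToTrackball(double x, double y, double r)
{
    const double d = std::sqrt(x * x + y * y);
    if (d <= r / std::sqrt(2.0))
        return {x, y, std::sqrt(r * r - d * d)};  // inside: plain sphere equation

    const double z = r * r / (2.0 * d);           // z from the sheet (below r / sqrt(2) here)
    // keep the direction of (x, y) but rescale it so that x^2 + y^2 + z^2 = r^2
    const double scale = std::sqrt(r * r - z * z) / d;
    return {x * scale, y * scale, z};
}
```

With r = 1, the point (2, 0) lies outside the ball: the sheet gives z = 0.25, and (x, y) is pulled in to (sqrt(0.9375), 0) so the result has unit length. Near d = r/√2 the two branches agree, so the rotation does not jump as the cursor crosses the boundary.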

See the Trackball class (Trackball.cpp) in the example below for details on how the mouse position is mapped to the trackball.

Reference: trackball.h / trackball.c by Gavin Bell at SGI.

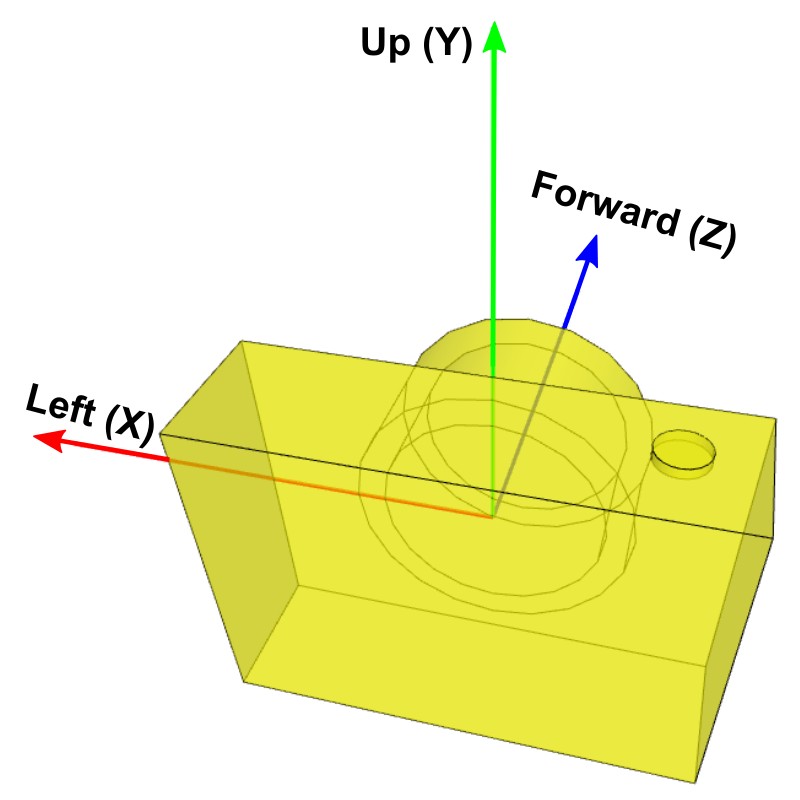

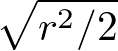

Example: OpenGL Orbit Camera

This GUI application visualizes various camera transformations, such as lookAt and rotations. A WebGL (JavaScript) version of the orbit camera is also available here.

Download source and binary:

(Updated: 2025-11-20)


OrbitCamera.zip (includes MS VisualStudio 2022 project)

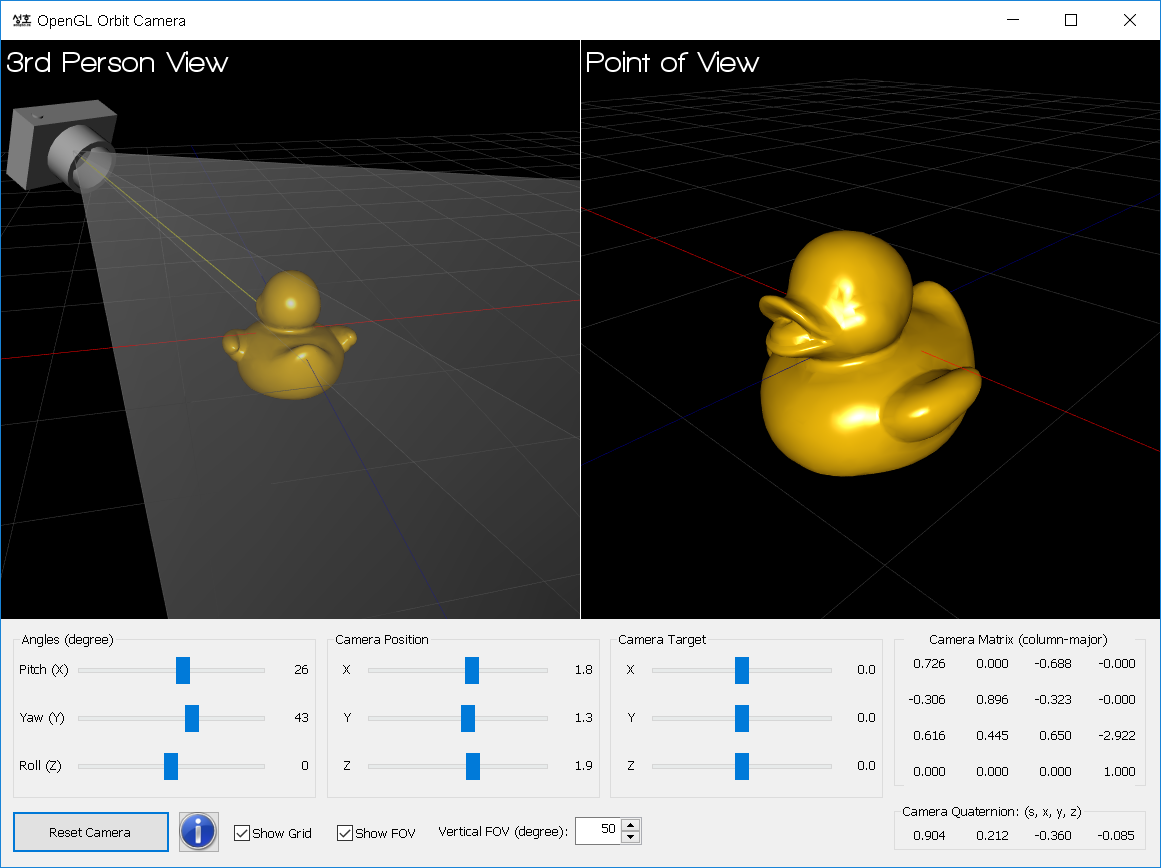

Example: Trackball

This demo application simulates a trackball-style camera rotation by mapping the mouse cursor position in screen space onto the surface of a trackball (3D sphere). It provides 2 different mapping modes: Arc and Project. See the C++ Trackball and Quaternion classes for implementation details.

Download: trackball.zip

(Updated 2025-10-23)

Reference: trackball.h / trackball.c by Gavin Bell at SGI.